Briefing Creator – an adventure in Google Apps Script

Background

When I returned to Springer Nature in October 2018 it was to take over front-end dev responsibilities on Nature Briefing from another contractor who was moving onto another gig. During the week of handover, they told me all about Briefing while they frantically tried to finish the documentation and to fix the tests. Standard handover, nothing out of the ordinary.

Nature Briefing is a daily email to over a quarter of a million subscribers containing handpicked and curated content plucked from Nature.com and other science sites and journals. When I arrived the editors were writing this collaboratively (and remotely) in a Google Document, and then once complete, one of their team would take the content and manually copy and paste it into ~25 different templated components (e.g. article, full-width image with caption, quote of the day, etc.), and then compile the whole kaboodle into one outer template, in Dreamweaver. This manual process they had to do every weekday, and it took about an hour (twice on Fridays, as there is a weekly Briefing as well as the daily). My predecessor said that there had been an idea about investigating Google Apps Script as a way of perhaps streamlining this process, and would I like to start investigating that while they tried to fix the tests?

Yes, actually, I would. That sounded pretty interesting. Little did I know quite how much Regular Expressions I was letting myself in for.

The Premise

I wanted to find a way to remove the manual compilation step completely, so that the editors would be able to collaborate on the writing of the content, then push a button and have the compiled HTML generated for them. I also wanted the HTML itself to be retrieved from GitHub each time, so that changes made to the templates and components could be made by the project team and the editorial team need not store copies of the HTML locally, which could easily go out of date.

The Solution

To automate something and get consistent output you must have consistent input. And so to make sure that each component was output correctly, I had to first standardise the input, which meant going through each component and codifying exactly which parts were user-generated (and also what format they were, and whether they were optional). I did this in a spreadsheet and checked it through with the editorial team, then took each component and replaced any dummy content with a placeholder of my own design which my code could then hook onto and replace with the editorial content.

For example, the article component changed from this:

<tr>

<td class="content-block">

<h2><a href="article_link" target="_blank">Headline</a></h2>

<p style="line-height:1.5;">Article_body_text</p>

<span class="content-reference" style="font-size:14px;">

<a href="article_link" target="_blank">Source</a> | X min read

</span>

</td>

</tr>to this:

<tr>

<td class="content-block">

<h2><a href="[[source-url]]" target="_blank">[[headline]]</a></h2>

<p style="line-height:1.5;">[[body-text]]</p>

<span class="content-reference" style="font-size:14px; clear: both;">

[[source(s)-&-reading-time(s)]]<br>

</span>

<span class="content-reference optional-field"

style="font-size:14px; clear: both;">

[[reference(s)]]<br>

</span>

</td>

</tr>The field names are enclosed in a double set of square brackets for the script to be able to find them and replace them with content at compilation time. That lets the script find the fields, but it doesn’t tell the script anything about them. To solve this I added an HTML “front matter” comment to each component containing information about the fields. In the example of the article component, this looks like:

<!--///

[

["Headline", {}],

["Body text", {"rowHeight": 80, "richText": true}],

["Source(s) & reading time(s)", {"richText": true}],

["Reference(s)", {"richText": true, "optional": true}],

]

\\\-->That tells the script that there are four fields (‘source url’ is extracted from the ‘sources & reading times’ field) for user content in this component, three of them are “richText” (meaning that italics, bold, links, etc are allowed), that one of them is optional, and that one of them should have a nice big box for the editors to type in. The script reads the front matter for each component when it loads and stores these fields so that when the editor wants to add a component to the document, it knows which fields to display.

Input…

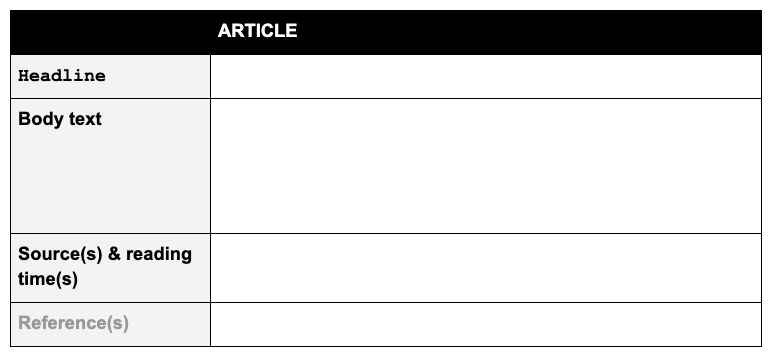

Since I wanted the editors to be presented with a consistent interface to input the content and each component can be reduced to a list of fields, I represented the fields as a table within the Google Doc. The article input table, created from that comment array above, looks like this:

Monospaced field names indicate that the field is text only, sans-serif field names are for “richText” fields. Grey field names indicate optional fields.

Using tables – and only tables – for input also means that the editors can annotate and comment to their hearts’ content outside those tables, and it will all be ignored by the script at compilation time.

…Output

In order to convert the content in the Google Doc to HTML, the script needs to spider through each component table and for each one:

- retrieve the stored HTML for this component type

- walk through the fields in the component table

- replace the placeholder for each field with the entered content

And voila, HTML!

Well, not quite – that would only give you the text entered into each box, not the formatting. I needed to reproduce bold, italics, links, lists and linebreaks in any field where “richText” is allowed. Thankfully Google Script can detect these attributes/elements and therefore they can be reproduced in the HTML. Even more thankfully, someone had done this before. I found two scripts that were extremely helpful for this step on GitHub, from users oazabir and thejimbirch. Plugging this into my creator script now meant that HTML attributes would be added into the output where necessary to reproduce the formatting I needed.

Once that was done all I needed to do was concatenate all the processed components and then insert the glob of HTML into the outer template that holds the frame and all the CSS.

So… voila, HTML?

Pretty much, actually. The searching and replacing in all these files and templates nearly drove me insane (mostly because I hate RegExp almost as much as it hates me – praise be for Regex101) but that aside it was then mostly a case of building a useable UI inside the Google Doc for the editors to use.

This script went into daily use in April 2019 and has been used for every Nature Briefing since.

Issues

There are a few things about this solution that aren’t ideal.

- The big one is that it is a Google Script, and lives on Google Drive, so can’t be kept in GitHub. There are ways around this for some Google Add-on Scripts, but not ones which are “container-bound”, which the Briefing Creator has to be in order to be able to read/write from the Google Doc. One manual (and inevitably unreliable) workaround for this would be to manuallly copy and paste all the files into a repo as a backup, and update that whenever changes are made.

- For the script to be able to retrieve the components and template HTML from GitHub, it needs an access token, which for the first 6 months of use was a token bound to my personal GitHub account. Not ideal, since I’m a contractor and the script is intended to be used beyond my contract here. To get around this we created a Briefing Admin account for the team to maintain going forward, then used that to create a new access token for the script.

- There is also a bug to do with lists (editors like lists, they use them a lot). When the script creates the HTML elements, it can only look at each element in isolation, not at the parent element. This is fine for almost everything, but lists contain list elements, and if the script is looking at a list element it cannot see the list itself, and therefore doesn’t know which list it belongs to, or when to start and finish a list. The workaround for this involves using the internal Google Docs element ID for each list. That’s great, until an editor copies and pastes a list from one place to another, and the internal ID is copied with it. I have not had time to look into a fix for this yet.

- Google Script cannot detect subscript/superscript text. Science journals use those a lot, with all their CO2 and E=MC2 and the like. To get around this the editors must type the actual

<sub></sub>or<sup></sup>tags into the content, since the script doesn’t strip those out. - There is no validation in the script as yet, so if the editor leaves out a non-optional field, there will be no error message and the output may look undesirable. I hope to have time to fix this soon.

All that said, making this script has meant that someone doesn’t have to spend 6 hours a week copying and pasting in Dreamweaver 🎉😃.

Find this post useful, or want to discuss some of the topics?